Post-Market Monitoring (PMM) for AI Act Compliance

AI safety and compliance do not stop at launch. Under the EU AI Act, post-market monitoring is a legal obligation for providers of high-risk AI systems, and deployers carry related duties to monitor operation and report issues. AIRTA Systems delivers it as a complete managed service: a consumer reporting portal, oversight logs, incident-ready workflows, and audit-ready evidence, all in one place.

The PMM obligation in plain English

The EU AI Act and Product Liability Directive do not just ask "is your AI safe today?" — they ask "how do you know, continuously, and can you prove it?"

Regulators expect you to actively watch for problems in production, not wait for something to go wrong. Penalties under the EU AI Act reach up to €35M or 7% of global annual turnover for prohibited practices, and up to €15M or 3% for breaches of most other obligations. If a consumer is harmed by your AI, your defence is a documented history of due diligence.

Documentation written before launch is not enough. You need a continuous record of real-world monitoring, user-reported issues, and what you did about them. That is what PMM provides.

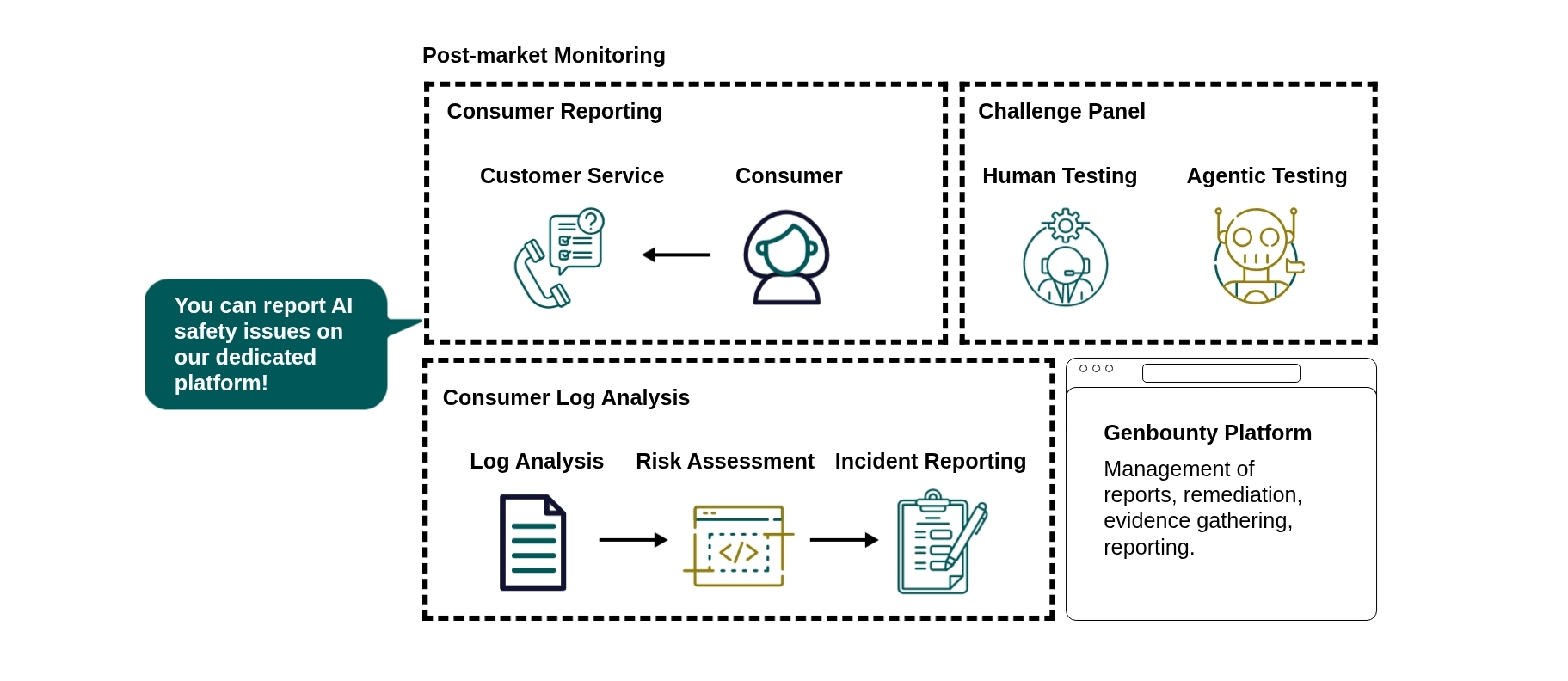

How the PMM pipeline works

AIRTA Systems PMM runs three continuous streams simultaneously. Each stream generates its own evidence. Together, they produce a complete, exportable compliance package — because with AIRTA Systems, compliance is the product.

Stream 1 — Consumer Reporting

Consumers report AI safety issues directly through your public AIRTA Systems program. This is not a one-way ticket box but a managed resolution process: the consumer and your team communicate through the platform until the issue reaches a satisfactory resolution.

Every message, update, and action in that thread is recorded, timestamped, and exportable. The full incident, from first report to final resolution, is packaged as evidence. It documents the consumer feedback loop end to end and supports your position under the Product Liability Directive.
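To make the idea of a recorded, timestamped, exportable thread concrete, here is a minimal Python sketch. It is an illustration only and not the AIRTA Systems API: the `IncidentThread` and `ThreadEvent` names, the field names, and the JSON layout are all assumptions.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class ThreadEvent:
    author: str     # hypothetical roles, e.g. "consumer" or "provider"
    kind: str       # hypothetical kinds, e.g. "message", "update", "action"
    body: str
    timestamp: str  # ISO 8601 string in UTC


@dataclass
class IncidentThread:
    incident_id: str
    events: list = field(default_factory=list)

    def record(self, author, kind, body, now=None):
        """Append an event stamped with the given time, or the current UTC time."""
        now = now or datetime.now(timezone.utc)
        event = ThreadEvent(author, kind, body, now.isoformat())
        self.events.append(event)
        return event

    def export(self):
        """Serialize the whole thread, first report to resolution, as JSON."""
        return json.dumps(asdict(self), indent=2)
```

A thread built this way can be handed to a reviewer as a single JSON document, with events in the order they were recorded.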

Stream 2 — Consumer Log Analysis

Incoming consumer logs are continuously processed through a structured pipeline: log analysis → risk assessment → incident reporting.

Patterns across submissions are surfaced automatically. Each issue is mapped to EU AI Act risk classifications and assessed for severity and regulatory relevance. Confirmed incidents are escalated with structured workflows. Every step — triage, assessment, escalation — is logged and packaged as evidence, ready to export for regulators, legal teams, or due diligence.
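The three stages above (log analysis, risk assessment, incident reporting) can be sketched as a small Python pipeline. This is a toy under stated assumptions, not AIRTA Systems' implementation: the keyword rules, risk labels, severity scores, and escalation threshold are hypothetical, and a real mapping to EU AI Act risk classifications would need legal review.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical keyword rules: category -> (risk label, severity score).
RULES = {
    "injury": ("high", 3),
    "discrimination": ("high", 3),
    "misleading": ("limited", 2),
    "typo": ("minimal", 1),
}


@dataclass
class Assessment:
    log: str
    category: str
    risk: str
    severity: int


def analyse(logs):
    """Log analysis: tag each log with the first matching category, if any."""
    for log in logs:
        text = log.lower()
        category = next((k for k in RULES if k in text), None)
        yield log, category


def assess(tagged):
    """Risk assessment: attach a risk label and severity to tagged logs."""
    for log, category in tagged:
        if category is not None:
            risk, severity = RULES[category]
            yield Assessment(log, category, risk, severity)


def report(assessments, threshold=3):
    """Incident reporting: escalate at or above the threshold, audit every decision."""
    audit, incidents = [], []
    for a in assessments:
        escalated = a.severity >= threshold
        audit.append({"log": a.log, "risk": a.risk, "escalated": escalated})
        if escalated:
            incidents.append(a)
    return incidents, audit


def run_pipeline(logs):
    """Run all three stages and count recurring categories across submissions."""
    assessments = list(assess(analyse(logs)))
    patterns = Counter(a.category for a in assessments)
    incidents, audit = report(assessments)
    return incidents, audit, patterns
```

The audit list records every assessed log, escalated or not, which is the property that makes each step usable as evidence later.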

Stream 3 — Challenge Panel

This stream does not wait for consumers to find problems. The Challenge Panel runs proactive, continuous testing of your AI application using two methods: human testers, reviewers who probe for real-world failure modes, and agentic testing, AIRTA Systems' proprietary LLM-driven suite that simulates adversarial inputs, prompt injection, hallucinations, and misuse scenarios at scale.

Every finding is recorded and exportable. Problems get caught and documented before they reach your users — turning proactive testing into part of your compliance evidence.

What you are protected against

Regulatory Exposure

- Meet the EU AI Act's mandatory post-market monitoring obligation with a documented, continuous program.

- Document a consumer feedback loop that supports your position under the Product Liability Directive.

- Demonstrate human oversight and due diligence — not just intent.

Legal and Litigation Risk

- Build an immutable timeline of safety activity that shifts the burden of proof in your favour.

- Every finding, fix, and oversight action is timestamped and traceable.

- Export structured evidence for legal review, due diligence, and regulatory submission.
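One common way to make a timeline of timestamped entries tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The Python sketch below shows that general technique only. Whether AIRTA Systems uses it is not stated here, and the function names and entry layout are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, payload):
    """Hash the previous hash together with a canonical JSON form of the payload."""
    data = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + data).hexdigest()


def append_entry(chain, payload):
    """Append a payload, linking it to the hash of the entry before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    }
    chain.append(entry)
    return entry


def verify(chain):
    """Return True only if every link and every payload hash is intact."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any earlier payload changes its digest, so `verify` fails from that point on. That is what lets a reviewer trust the order and content of the record.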

Reputational and Operational Risk

- Detect serious incidents and unsafe behaviour before they escalate.

- Give consumers a direct channel to report problems — demonstrating you take safety seriously.

- Connect exports to your ticketing, SIEM, GRC, or data lake with minimal friction.

Every incident without PMM is an incident without a defence

Q4 2026 is not a soft deadline. Regulators will ask for evidence of continuous monitoring — not a policy document written at launch. Every day your AI is live without PMM is a day your evidence trail has a gap.

Activating takes two minutes. Your AI application gets a live consumer reporting portal, your team gets structured incident workflows, and you start generating a compliance evidence package immediately. When a regulator asks for proof — or a lawyer asks what you did about reported issues — you have a complete, exportable record.

Ready for the full picture?

Move from PMM to complete, continuous EU AI Act compliance — testing, documentation, monitoring, and certification through one platform. Mandatory compliance begins Q4 2026.
